Domain Adaptation of Virtual and Real Worlds for Pedestrian Detection

2013

David Vázquez

Abstract

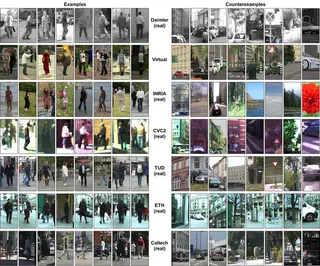

Pedestrian detection is of paramount interest for many applications, e.g. Advanced Driver Assistance Systems, Surveillance and Media. The most promising pedestrian detectors rely on appearance-based classifiers trained with annotated samples. However, the required annotation step is an intensive and subjective task when it has to be done by humans. Therefore, it is worthwhile to minimize human intervention in this task by using computational tools like realistic virtual worlds, where precise and rich annotations of visual information can be generated automatically. Nevertheless, the use of this kind of data raises the following question: can a pedestrian appearance model learnt with virtual-world data work successfully for pedestrian detection in real-world scenarios? To answer this question, we conducted different experiments, which suggest that classifiers based on virtual-world data can perform well in real-world environments. However, we also found that in some cases these classifiers can suffer from the so-called dataset shift problem, as real-world-based classifiers do. Accordingly, we have designed a domain adaptation framework, V-AYLA, in which we have explored different techniques to collect a few pedestrian samples from the target domain (real world) and combine them with many samples from the source domain (virtual world) in order to train a domain-adapted pedestrian classifier. V-AYLA reports the same detection performance as the one obtained by training with human-provided pedestrian annotations and testing with real-world images from the same domain. Ideally, we would like to adapt our system without any human intervention. Therefore, as a first proof of concept, we propose the use of an unsupervised domain adaptation technique that avoids human intervention during the adaptation process. To the best of our knowledge, this is the first work that demonstrates adaptation of virtual and real worlds for developing an object detector.
We also assess a different strategy to avoid the dataset shift, which consists of collecting real-world samples and retraining with them, but in such a way that no bounding boxes of real-world pedestrians have to be provided. We show that the resulting classifier is competitive with its counterpart trained with samples collected by manually annotating pedestrian bounding boxes. The results presented in this thesis not only culminate in a proposal for adapting a virtual-world pedestrian detector to the real world, but also go further by pointing out a new methodology that would allow the system to adapt to different situations, which we hope will provide the foundations for future research in this unexplored area.

Type

Publication

PhD Thesis, Universitat Autònoma de Barcelona