Semantic Segmentation of Urban Scenes via Domain Adaptation of SYNTHIA

2017

German Ros

Laura Sellart

Gabriel Villalonga

Elias Maidanik

Francisco Molero

Marc Garcia

Adriana Cedeño

Francisco Perez

Didier Ramirez

Eduardo Escobar

Others

Abstract

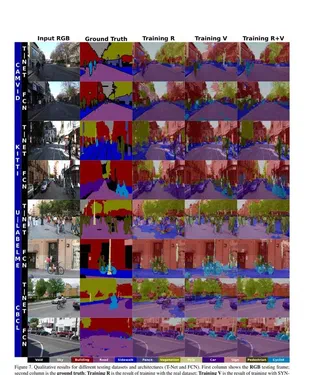

Vision-based semantic segmentation in urban scenarios is a key functionality for autonomous driving. Recent revolutionary results of deep convolutional neural networks (CNNs) foreshadow the advent of reliable classifiers to perform such visual tasks. However, CNNs require learning many parameters from raw images; thus, a sufficient number of diverse images with class annotations is needed. These annotations are obtained via cumbersome human labor, which is particularly challenging for semantic segmentation since pixel-level annotations are required. In this chapter, we propose to combine a virtual world, used to automatically generate realistic synthetic images with pixel-level annotations, with domain adaptation, used to transfer the learned models so that they operate correctly in real scenarios. We address the question of how useful synthetic data can be for semantic segmentation, in particular when using a CNN paradigm. To answer this question, we have generated a synthetic collection of diverse urban images, named SYNTHIA, with automatically generated class annotations and object identifiers. We use SYNTHIA in combination with publicly available real-world urban images with manually provided annotations. We then conduct experiments with CNNs showing that combining SYNTHIA with simple domain adaptation techniques in the training stage significantly improves performance on semantic segmentation.

Publication

Domain Adaptation in Computer Vision Applications, Springer