A Multimodal Class-Incremental Learning Benchmark for Classification Tasks

2024

Marco D'Alessandro

Enrique Calabrés

Mikel Elkano

David Vázquez

Abstract

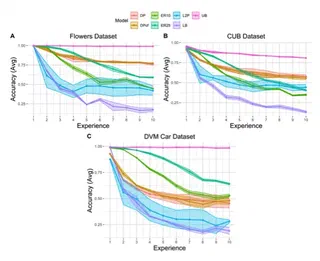

Continual learning has made significant progress in addressing catastrophic forgetting in the vision and language domains, yet most research has treated these modalities separately. Multimodal continual learning remains sparsely explored, and the few existing works focus on specific applications such as visual question answering (VQA), text-to-vision retrieval, and incremental multi-tasking. These efforts lack a general benchmark to standardize the evaluation of models in multimodal continual learning settings. In this paper, we introduce a novel benchmark for Multimodal Class-Incremental Learning (MCIL), designed specifically for multimodal classification tasks. The benchmark comprises a curated selection of multimodal datasets tailored to classification challenges. We further adapt a widely used vision-language model to multiple existing continual learning strategies, providing insights into the behavior of vision-language models in incremental classification tasks. This work represents the first comprehensive framework for MCIL, establishing a foundation for future research in multimodal continual learning.

Publication

Workshop at Advances in Neural Information Processing Systems (NeurIPS)