Elektra Autonomous Vehicle

Elektra

A multidisciplinary autonomous driving research platform

Elektra is an autonomous driving research platform born at the Computer Vision Centre (CVC) of the Universitat Autònoma de Barcelona. I co-conceived and coordinated the project from the ground up: selecting the vehicle, leading its drive-by-wire conversion, assembling the consortium, and staying involved across every technical workstream. My primary expertise was perception, and I co-led the project with my supervisor, Antonio López. Together we gathered more than 20 researchers from seven institutions, dozens of students, and industry partners including NVIDIA, which sponsored us with GPUs, cameras, and a DRIVE PX platform. Our embedded pedestrian detector won Best Poster at NVIDIA GTC 2016 in the USA. Elektra became the reference autonomous driving hub in Catalonia, attracting media coverage and establishing itself as a landmark research project in the region.

Project Overview & Consortium

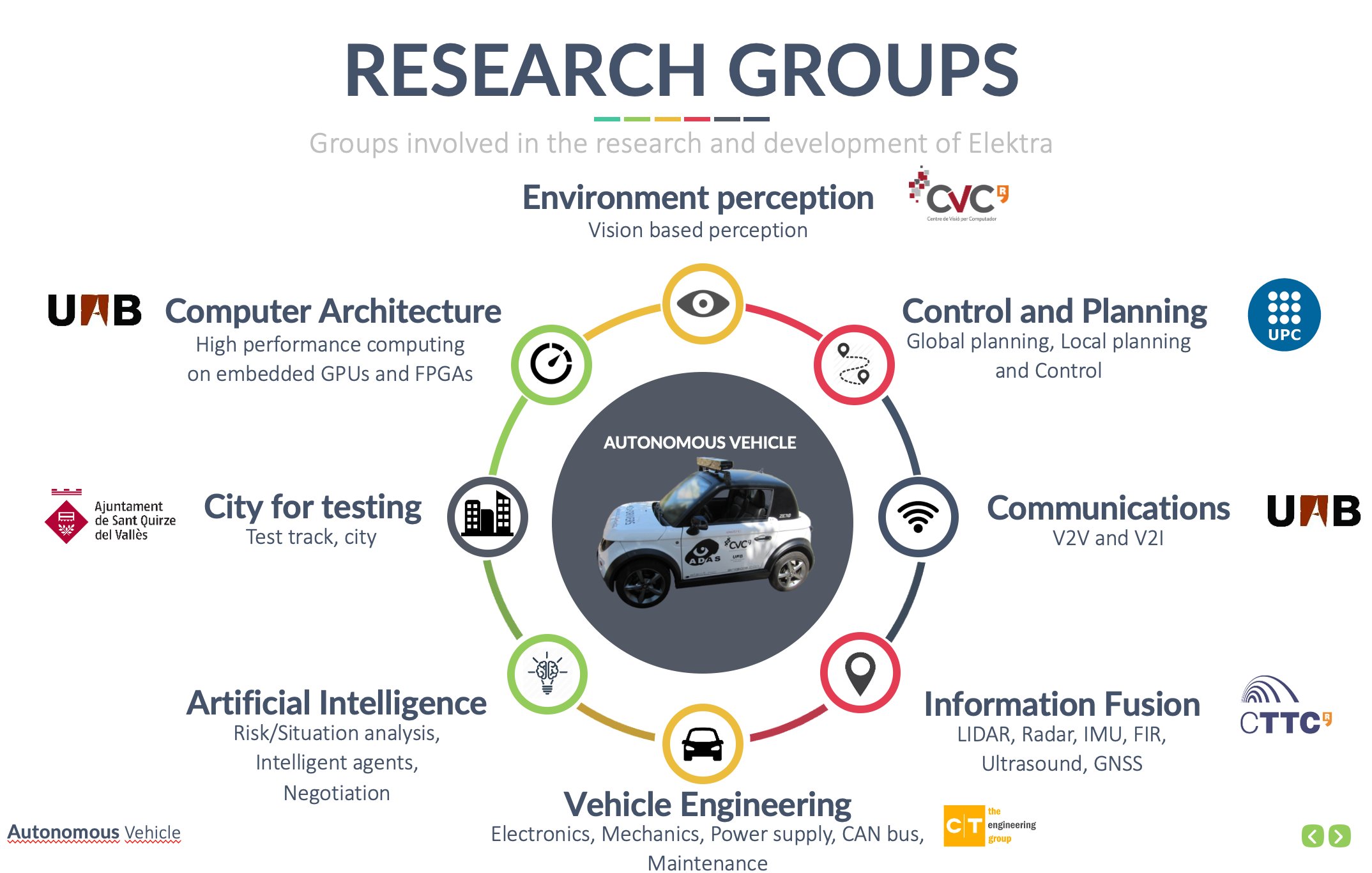

Elektra was conceived as the Catalan hub for autonomous driving research, bringing together expertise from computer vision, embedded hardware, control theory, communications, electronics, and vehicle engineering under a single collaborative platform. The project aimed to bridge the gap between fundamental research and real-world intelligent mobility, with technology transfer as a core ambition.

The consortium brought together seven institutions plus the municipality of Sant Quirze del Vallès. CVC/UAB led environment perception via computer vision; CAOS/UAB handled embedded hardware and high-performance computing on GPUs and FPGAs; UPC Terrassa developed control and planning; CTTC/UPC contributed positioning and sensor fusion (LIDAR, radar, IMU, GNSS); UAB/DEIC covered vehicle communications (V2V and V2I); UAB/CEPHIS managed vehicle electronics and drive-by-wire; CT Ingenieros handled vehicle engineering, mechanics, and the CAN bus; and the Ajuntament de Sant Quirze del Vallès provided the test track and the city environment for real-world validation.

The Vehicle

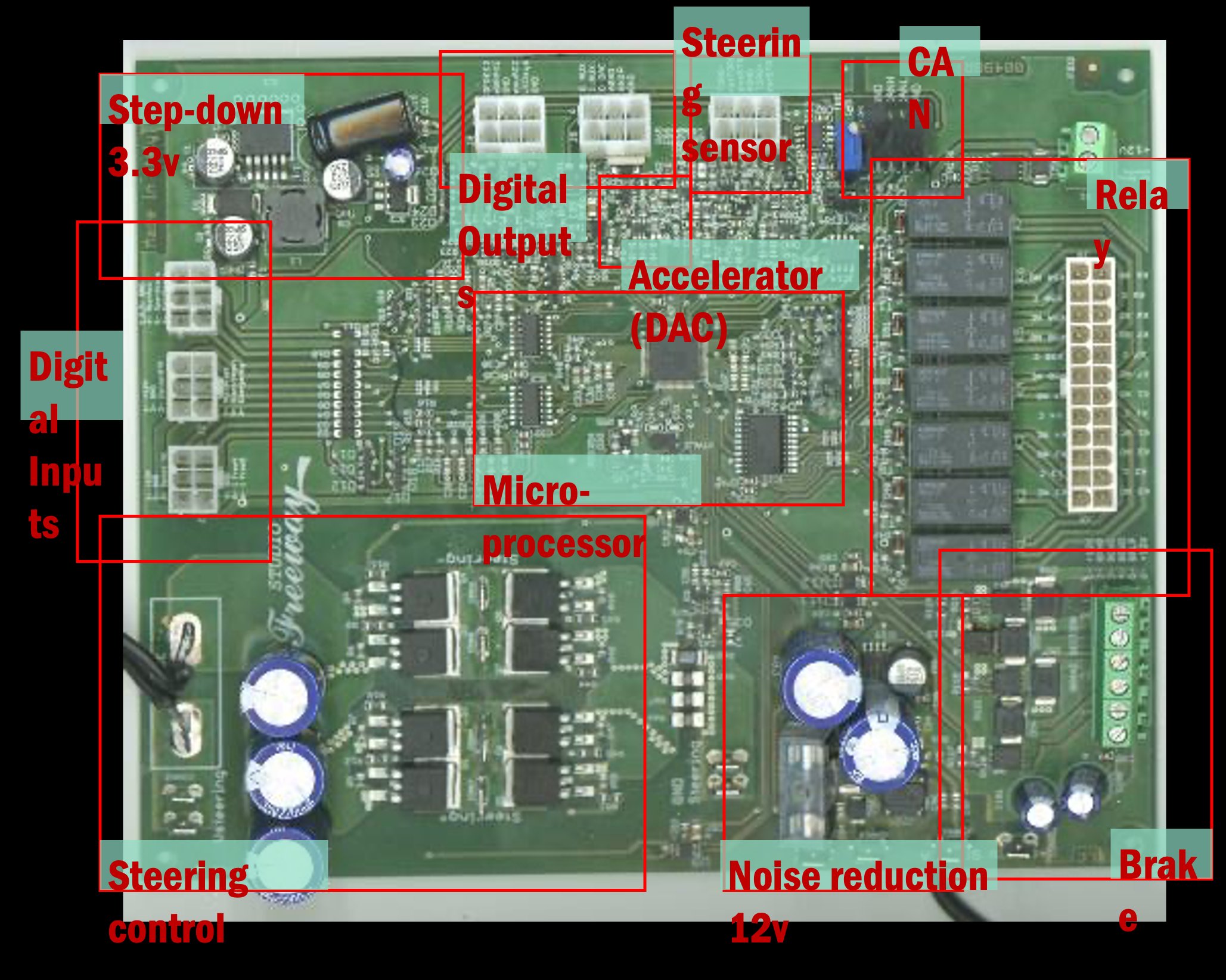

Elektra is built on a Tazzari Zero electric microcar, chosen for its compact size, electric drivetrain, and suitability for urban driving research. One of the first and most critical steps of the project was transforming this standard production vehicle into a fully drive-by-wire platform: every actuator (throttle, steering, and brakes) was interfaced electronically so that a computer could issue commands directly over the CAN bus.

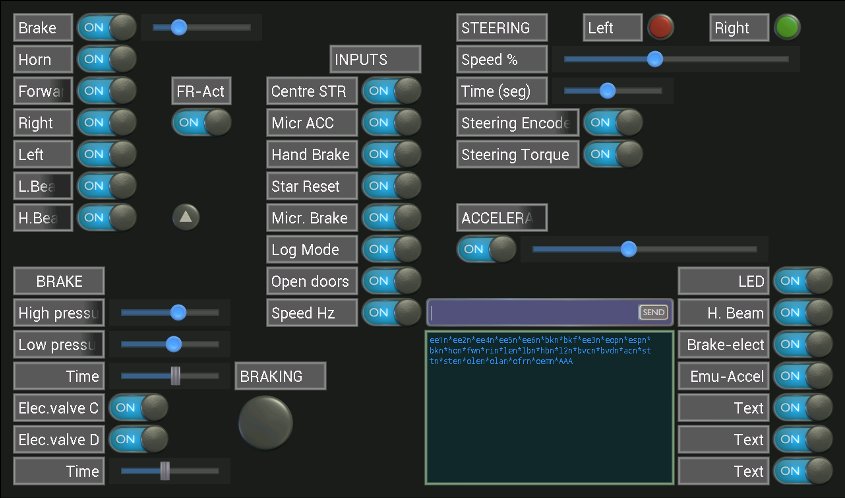

The custom electronics board integrates a microprocessor, digital inputs and outputs, a steering sensor, an accelerator DAC, brake control, noise-reduction circuitry, and relays, giving the software stack full authority over the vehicle's motion while preserving manual override for safety.
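To make "a computer issues commands over the CAN bus" concrete, here is a minimal sketch of packing throttle, steering, and brake set-points into one classic 8-byte CAN payload. Everything specific here is a hypothetical assumption, not Elektra's actual protocol: the arbitration ID `ACTUATOR_CMD_ID`, the fixed-point scaling, the rolling counter, and the additive checksum. It only illustrates the common pattern of clamping, fixed-point encoding, and integrity checks that such a board would validate before driving the actuators.

```python
import struct
from dataclasses import dataclass
from typing import Tuple

# Hypothetical arbitration ID for the actuator command frame (not Elektra's real value).
ACTUATOR_CMD_ID = 0x100


@dataclass
class ActuatorCommand:
    throttle: float  # 0.0 .. 1.0, fraction of full accelerator travel
    steering: float  # -1.0 (full left) .. 1.0 (full right)
    brake: float     # 0.0 .. 1.0, fraction of maximum brake effort


def _clamp(x: float, lo: float, hi: float) -> float:
    return max(lo, min(hi, x))


def encode_command(cmd: ActuatorCommand, counter: int) -> Tuple[int, bytes]:
    """Pack a command into an 8-byte payload (hypothetical layout, little-endian).

    bytes 0-1: throttle, uint16, 0..10000
    bytes 2-3: steering, int16, -10000..10000
    bytes 4-5: brake, uint16, 0..10000
    byte  6:   rolling counter so the receiver can detect stale or repeated frames
    byte  7:   checksum = sum of bytes 0..6 modulo 256
    """
    throttle = round(_clamp(cmd.throttle, 0.0, 1.0) * 10000)
    steering = round(_clamp(cmd.steering, -1.0, 1.0) * 10000)
    brake = round(_clamp(cmd.brake, 0.0, 1.0) * 10000)
    body = struct.pack("<HhHB", throttle, steering, brake, counter & 0xFF)
    checksum = sum(body) & 0xFF
    return ACTUATOR_CMD_ID, body + bytes([checksum])


def decode_command(payload: bytes) -> Tuple[ActuatorCommand, int]:
    """Validate and unpack a payload produced by encode_command."""
    if len(payload) != 8:
        raise ValueError("expected an 8-byte payload")
    if sum(payload[:7]) & 0xFF != payload[7]:
        raise ValueError("checksum mismatch")
    throttle, steering, brake, counter = struct.unpack("<HhHB", payload[:7])
    return ActuatorCommand(throttle / 10000, steering / 10000, brake / 10000), counter
```

Clamping on the sender side is a convenience only; a real drive-by-wire board would enforce its own limits and the manual-override path in hardware, independently of whatever the software sends.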

The vehicle was equipped with a purpose-built sensor and computing stack. Additional battery packs in the front trunk powered the onboard computers. A server in the rear trunk housed the GPUs, IMU, and GPS receiver. A roof-mounted rack carried a custom stereo camera pair and the GPS antenna, forming the primary sensing suite for perception and localization.

For testing, Elektra had access to a dedicated track in Sant Quirze del Vallès, a scaled-down replica of Catalonia's Formula 1 circuit, which provided a controlled environment for autonomous driving experiments before deployment on real urban streets in Barcelona.

Perception

Perception is the foundation of autonomous driving: the vehicle must understand its surroundings before it can make any decision. At Elektra, perception was the core expertise of the CVC team and the area where the project made its most significant research contributions.

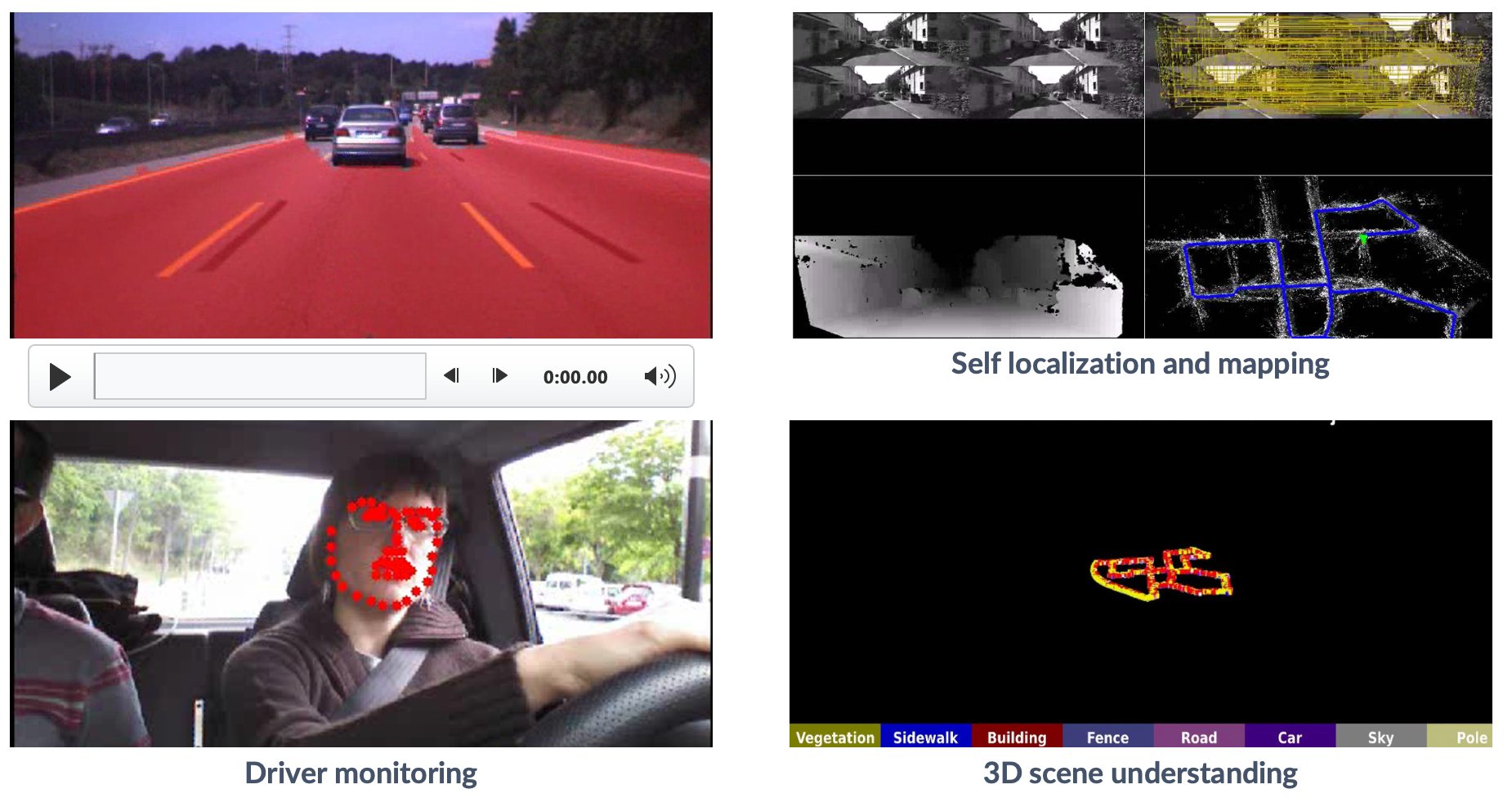

The primary sensing modality is a custom stereo camera pair, which provides dense 3D information about the driving scene. From this, we developed a full perception pipeline covering obstacle detection, free space estimation, semantic scene understanding, and driver monitoring.
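The dense 3D information comes from triangulation: in a rectified stereo pair, a pixel's horizontal disparity d between the left and right images gives its depth as Z = f·B/d, where f is the focal length in pixels and B the baseline between the cameras. The sketch below shows that relation and the back-projection to a 3D point; the calibration values in any usage are illustrative assumptions, not the parameters of Elektra's roof-mounted rig.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StereoRig:
    focal_px: float    # focal length in pixels (rectified images)
    baseline_m: float  # distance between the two camera centres, metres
    cx: float          # principal point x, pixels
    cy: float          # principal point y, pixels


def disparity_to_depth(rig, disparity_px):
    """Depth Z = f * B / d for a rectified pair; None where disparity is invalid."""
    if disparity_px <= 0:
        return None
    return rig.focal_px * rig.baseline_m / disparity_px


def pixel_to_point(rig, u, v, disparity_px):
    """Back-project pixel (u, v) with disparity d to camera coordinates (X, Y, Z)."""
    z = disparity_to_depth(rig, disparity_px)
    if z is None:
        return None
    x = (u - rig.cx) * z / rig.focal_px
    y = (v - rig.cy) * z / rig.focal_px
    return (x, y, z)
```

For example, with an assumed f = 800 px and B = 0.3 m, a disparity of 24 px corresponds to 10 m. Because ΔZ ≈ Z²/(f·B)·Δd, a one-pixel disparity error costs far more accuracy at long range than up close, which is one reason the baseline of a stereo rig matters.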

Obstacle and pedestrian detection was a central research thread, with detectors applied to imagery from onboard cameras on Barcelona's urban streets, from drones, and from train-mounted cameras. A pioneering result was our virtual-world pedestrian detector presented at CVPR 2010, the first pedestrian detector trained entirely on video game imagery, foreshadowing the synthetic data revolution that followed years later.
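Classic window-based pedestrian detectors of this period scan a fixed-size window (commonly 64×128 pixels) across the image, score each window with a classifier, and then merge overlapping hits with non-maximum suppression (NMS). The sketch below shows that scan-and-suppress skeleton at a single scale. The classifier is a placeholder `score_fn` (in practice something like HOG features with a linear SVM), and the window size, stride, and IoU threshold are assumptions; this is not the project's detector code.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def sliding_windows(img_w, img_h, win_w, win_h, stride):
    """Yield every window position at a single scale."""
    for y in range(0, img_h - win_h + 1, stride):
        for x in range(0, img_w - win_w + 1, stride):
            yield (x, y, x + win_w, y + win_h)


def non_max_suppression(detections, iou_threshold=0.5):
    """Greedy NMS over (box, score) pairs: keep the best box, drop heavy overlaps."""
    remaining = sorted(detections, key=lambda d: d[1], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)
        kept.append(best)
        remaining = [d for d in remaining if iou(d[0], best[0]) < iou_threshold]
    return kept


def detect(img_w, img_h, score_fn, win=(64, 128), stride=8, threshold=0.0):
    """Score every window with a (placeholder) classifier, then apply NMS."""
    detections = []
    for box in sliding_windows(img_w, img_h, win[0], win[1], stride):
        score = score_fn(box)
        if score > threshold:
            detections.append((box, score))
    return non_max_suppression(detections)
```

A full detector would repeat the scan over an image pyramid to find pedestrians at different distances; the per-window scoring is what GPU and FPGA implementations parallelize to reach real-time rates.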

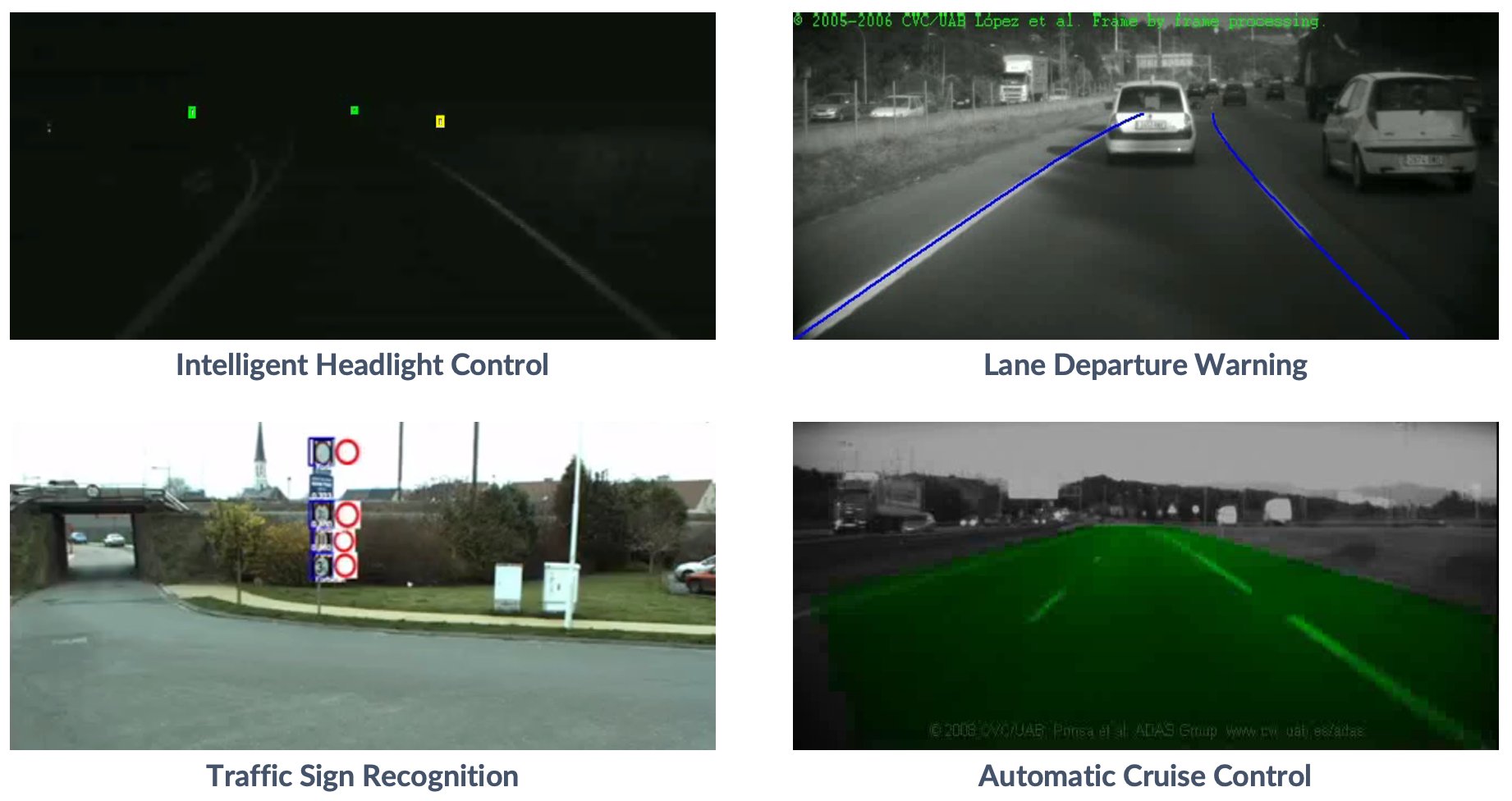

Road and free space detection identifies the drivable area in real time using semantic segmentation, while traffic sign recognition detects and classifies road signs automatically. 3D scene understanding combines object detection, stereo depth estimation, and semantic segmentation into a unified representation. The Stixel representation describes the scene as a set of thin vertical rectangles ("stixels") standing on the ground, yielding a compact obstacle map that is particularly well suited to real-time processing.
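The intuition behind free space and Stixels can be shown on a disparity map. For a flat road seen by a camera at height h with zero pitch, the road's expected disparity at image row v is B·(v − v_horizon)/h. Scanning each column upward from the bottom, the first row whose measured disparity clearly exceeds that ground model marks where free space ends and an obstacle begins; the rows above it with roughly the same disparity form the stixel. This is a deliberately simplified greedy version under those flat-road assumptions: published Stixel methods use probabilistic models and dynamic programming, and the margins here are hypothetical tuning values.

```python
def ground_disparity(v, horizon_row, baseline_m, camera_height_m):
    """Expected disparity of a flat road at image row v (zero camera pitch assumed)."""
    return max(0.0, baseline_m * (v - horizon_row) / camera_height_m)


def compute_stixels(disp, horizon_row, baseline_m, camera_height_m,
                    obstacle_margin=1.0, height_tol=1.0):
    """Greedy per-column stixel extraction from a disparity map (list of rows).

    Returns one stixel per column that contains an obstacle; columns that match
    the ground model all the way up are treated as free space.
    """
    rows, cols = len(disp), len(disp[0])
    stixels = []
    for u in range(cols):
        base = None
        # Scan from the bottom row upwards until the ground model is violated.
        for v in range(rows - 1, -1, -1):
            expected = ground_disparity(v, horizon_row, baseline_m, camera_height_m)
            if disp[v][u] - expected > obstacle_margin:
                base = v
                break
        if base is None:
            continue  # whole column is free space
        d_obj = disp[base][u]
        top = base
        # Grow the stixel upwards while disparity stays close to the obstacle's.
        while top - 1 >= 0 and abs(disp[top - 1][u] - d_obj) <= height_tol:
            top -= 1
        stixels.append({"column": u, "base_row": base, "top_row": top, "disparity": d_obj})
    return stixels
```

Each stixel summarizes a whole column band by just a base row, a top row, and a distance, which is why the representation is so cheap to compute and consume downstream.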

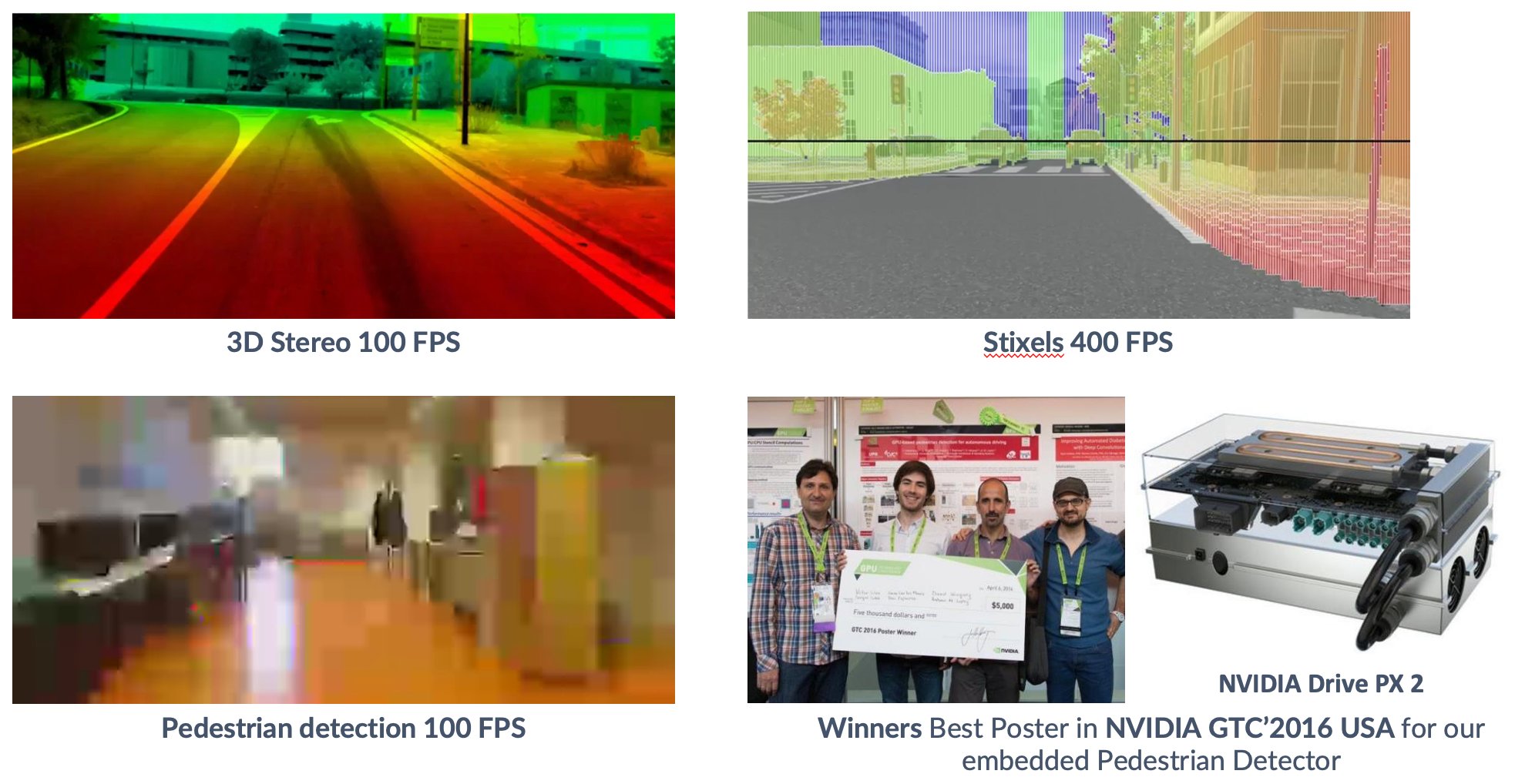

All of these algorithms were implemented to run at real-time speeds on embedded hardware. Using NVIDIA GPUs and FPGA boards, we achieved stereo depth at 100 FPS, Stixels at 400 FPS, and pedestrian detection at 100 FPS on the NVIDIA DRIVE PX platform. This work won Best Poster at NVIDIA GTC 2016 in the USA.

ADAS features built on top of this perception stack include lane keeping assist, intelligent headlight control, and adaptive cruise control.
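As an illustration of how an ADAS function sits on top of perception, adaptive cruise control can be sketched as a constant time-gap policy: with no vehicle ahead it tracks the driver's set speed, and with a lead vehicle detected it regulates the gap toward a standstill distance plus a fixed time headway. All gains, limits, and the time gap below are hypothetical values chosen for illustration, not Elektra's calibration.

```python
def acc_command(ego_speed, set_speed, lead_distance=None, lead_speed=None,
                time_gap=1.8, standstill_gap=5.0,
                k_speed=0.5, k_gap=0.2, k_rel=0.6,
                a_min=-3.0, a_max=1.5):
    """Desired longitudinal acceleration (m/s^2) from a constant time-gap ACC law.

    Speeds in m/s, distances in m. All tuning parameters are illustrative.
    """
    # Cruise mode: proportional tracking of the driver's set speed.
    a_cruise = k_speed * (set_speed - ego_speed)
    if lead_distance is None:
        a = a_cruise
    else:
        # Follow mode: desired gap grows linearly with ego speed.
        desired_gap = standstill_gap + time_gap * ego_speed
        gap_error = lead_distance - desired_gap
        a_follow = k_gap * gap_error + k_rel * (lead_speed - ego_speed)
        # Take the more conservative of the two commands.
        a = min(a_cruise, a_follow)
    # Saturate to comfortable acceleration and deceleration limits.
    return max(a_min, min(a_max, a))
```

Taking the minimum of the cruise and follow commands means the car never accelerates past what the set speed allows, while the saturation keeps the output within what the drive-by-wire throttle and brake can deliver smoothly.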

Localization

For an autonomous vehicle to navigate safely, it must know precisely where it is at all times. Elektra's localization system fuses complementary sensing modalities to achieve robust and continuous position estimation in urban environments.

The primary approach combines GPS and IMU data through sensor fusion, providing a global position reference with inertial corrections for smooth trajectory estimation. This is complemented by Visual Simultaneous Localization and Mapping (SLAM), which uses the onboard cameras to build a map of the environment while simultaneously tracking the vehicle's position within it, enabling localization even in areas where GPS signal is unreliable.
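A standard way to combine the two sources is a Kalman filter: the IMU's acceleration drives a high-rate prediction of position and velocity, and each GPS fix corrects the accumulated drift. The one-axis sketch below shows that predict/update structure with plain Python arithmetic. The noise levels are hypothetical, and a real vehicle filter would track full 2D/3D pose, orientation, and sensor biases; this only illustrates the fusion principle described above.

```python
class GpsImuKalman1D:
    """Kalman filter along one axis with state x = [position, velocity].

    IMU acceleration is the control input of the prediction step; GPS provides
    position measurements. Noise parameters are illustrative assumptions.
    """

    def __init__(self, accel_noise_std=0.5, gps_noise_std=2.0):
        self.x = [0.0, 0.0]
        self.P = [[100.0, 0.0], [0.0, 100.0]]  # large initial uncertainty
        self.q = accel_noise_std ** 2
        self.r = gps_noise_std ** 2

    def predict(self, accel, dt):
        """Propagate state with the IMU acceleration over dt seconds."""
        p, v = self.x
        self.x = [p + v * dt + 0.5 * accel * dt * dt, v + accel * dt]
        # P = F P F^T + G q G^T, with F = [[1, dt], [0, 1]] and G = [dt^2/2, dt].
        (a, b), (c, d) = self.P
        fp = [[a + dt * c, b + dt * d], [c, d]]
        fpf = [[fp[0][0] + dt * fp[0][1], fp[0][1]],
               [fp[1][0] + dt * fp[1][1], fp[1][1]]]
        g0, g1 = 0.5 * dt * dt, dt
        self.P = [[fpf[0][0] + self.q * g0 * g0, fpf[0][1] + self.q * g0 * g1],
                  [fpf[1][0] + self.q * g1 * g0, fpf[1][1] + self.q * g1 * g1]]

    def update_gps(self, gps_position):
        """Correct the state with a GPS position fix (H = [1, 0])."""
        (p00, p01), (p10, p11) = self.P
        s = p00 + self.r               # innovation variance
        k0, k1 = p00 / s, p10 / s      # Kalman gain
        y = gps_position - self.x[0]   # innovation
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        self.P = [[(1 - k0) * p00, (1 - k0) * p01],
                  [p10 - k1 * p00, p11 - k1 * p01]]
```

Between GPS fixes, or when the signal drops out, only `predict` runs and the position uncertainty in `P` grows; that growing uncertainty is exactly the gap that a complementary source such as visual SLAM can fill.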

Together these modalities give Elektra a robust sense of where it is, where it has been, and how to relate its perception outputs to a consistent world coordinate frame.

Planning & Control

Once the vehicle knows its environment and its position within it, it must decide where to go and how to get there. Elektra's planning and control stack translates perception and localization outputs into smooth, safe vehicle motion.

Global planning defines a route from point A to point B across the road network. Local planning operates at a finer scale, continuously adapting the trajectory to account for dynamic obstacles, road geometry, and traffic conditions detected by the perception system. Together they produce a motion plan that the low-level control layer then executes.
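Global route planning over a road network is classically a shortest-path search on a weighted graph, where intersections are nodes and road segments are edges weighted by length or travel time. The sketch below uses Dijkstra's algorithm with a binary heap; the graph format and example network are hypothetical, and the source does not specify which planner Elektra used. Local planning, which reacts to dynamic obstacles, is not covered here.

```python
import heapq


def shortest_route(graph, start, goal):
    """Dijkstra over a directed road graph {node: [(neighbour, length_m), ...]}.

    Returns (list_of_nodes, total_length), or (None, inf) if goal is unreachable.
    """
    dist = {start: 0.0}
    prev = {}
    queue = [(0.0, start)]
    visited = set()
    while queue:
        d, node = heapq.heappop(queue)
        if node in visited:
            continue  # stale heap entry
        visited.add(node)
        if node == goal:
            break
        for nbr, length in graph.get(node, []):
            nd = d + length
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(queue, (nd, nbr))
    if goal not in dist:
        return None, float("inf")
    # Walk predecessors back from the goal to rebuild the route.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1], dist[goal]
```

Directed edges let the same structure represent one-way streets; swapping segment length for expected travel time turns "shortest" into "fastest" without changing the algorithm.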

The control interface provides direct electronic authority over throttle, steering, and braking via the CAN bus. A custom software dashboard allows engineers to monitor and tune every actuator in real time, including brake pressure, steering torque, acceleration curves, and speed profiles. Safety mechanisms such as the autonomous emergency braking system were validated extensively on the test track before any on-road deployment.
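A common basis for autonomous emergency braking logic is time-to-collision (TTC): the current gap divided by the closing speed, under the assumption that both vehicles keep their current speeds. The sketch below turns TTC into a staged "none / warn / brake" decision and also computes the constant deceleration needed to avoid impact. The thresholds are hypothetical values for illustration only; the source does not describe how Elektra's AEB was parameterized.

```python
def time_to_collision(gap_m, ego_speed, obstacle_speed):
    """Seconds until impact if both speeds stay constant; inf if not closing."""
    closing = ego_speed - obstacle_speed
    if closing <= 0:
        return float("inf")
    return gap_m / closing


def required_deceleration(gap_m, ego_speed, obstacle_speed):
    """Constant deceleration (m/s^2) that cancels the closing speed within the gap."""
    closing = ego_speed - obstacle_speed
    if closing <= 0:
        return 0.0
    return closing ** 2 / (2 * gap_m)


def aeb_decision(gap_m, ego_speed, obstacle_speed, warn_ttc=2.6, brake_ttc=1.6):
    """Staged AEB decision from TTC thresholds (illustrative values)."""
    ttc = time_to_collision(gap_m, ego_speed, obstacle_speed)
    if ttc <= brake_ttc:
        return "brake"
    if ttc <= warn_ttc:
        return "warn"
    return "none"
```

Checking `required_deceleration` against what the brakes can actually deliver is one way to validate thresholds on a test track before trusting them on the road.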

The platform was also used to demonstrate imitation learning, in which the vehicle learns to drive by observing human driving behavior, as well as full end-to-end autonomous driving on real urban streets in Barcelona.

Autonomous Driving Simulation

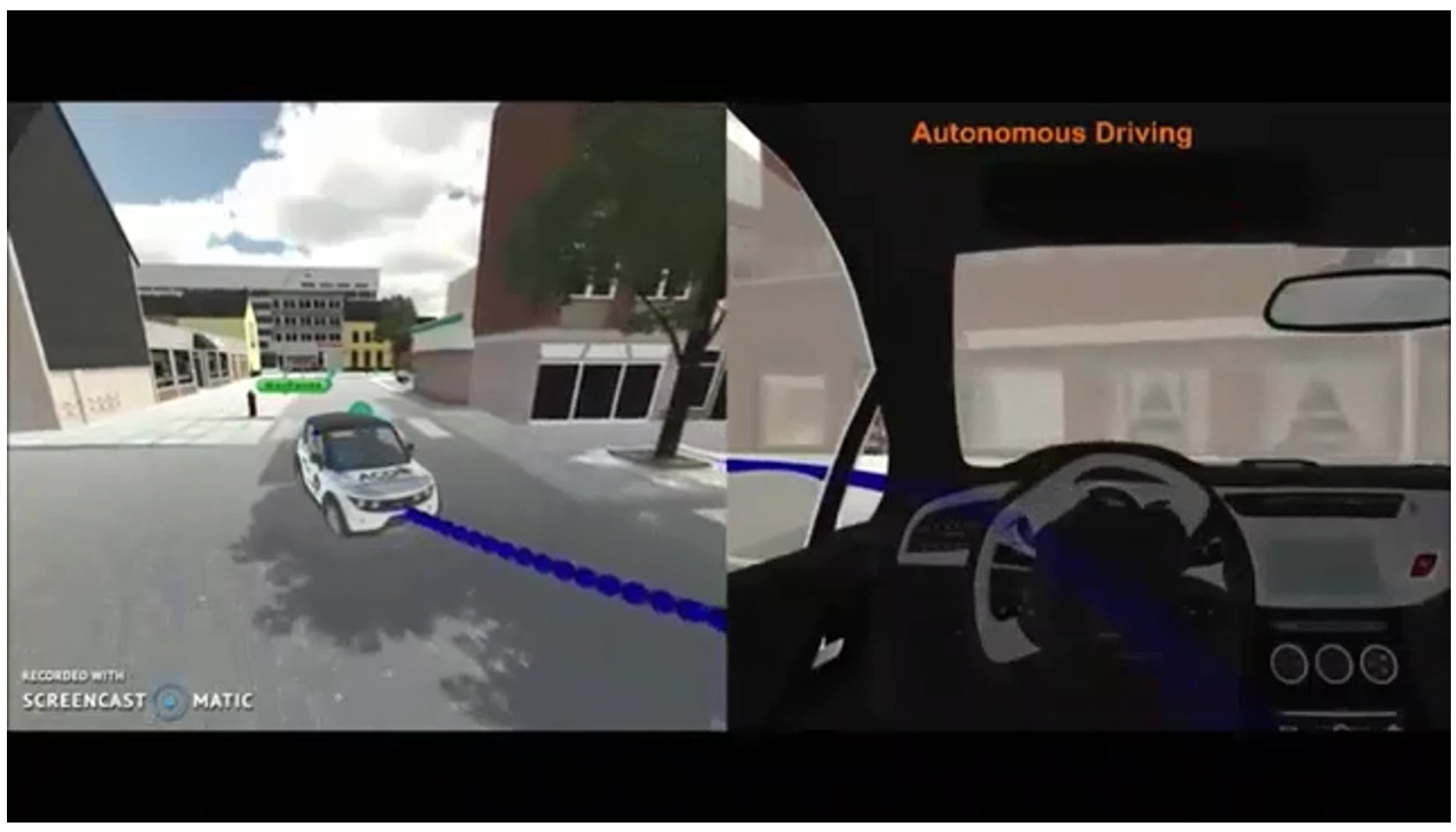

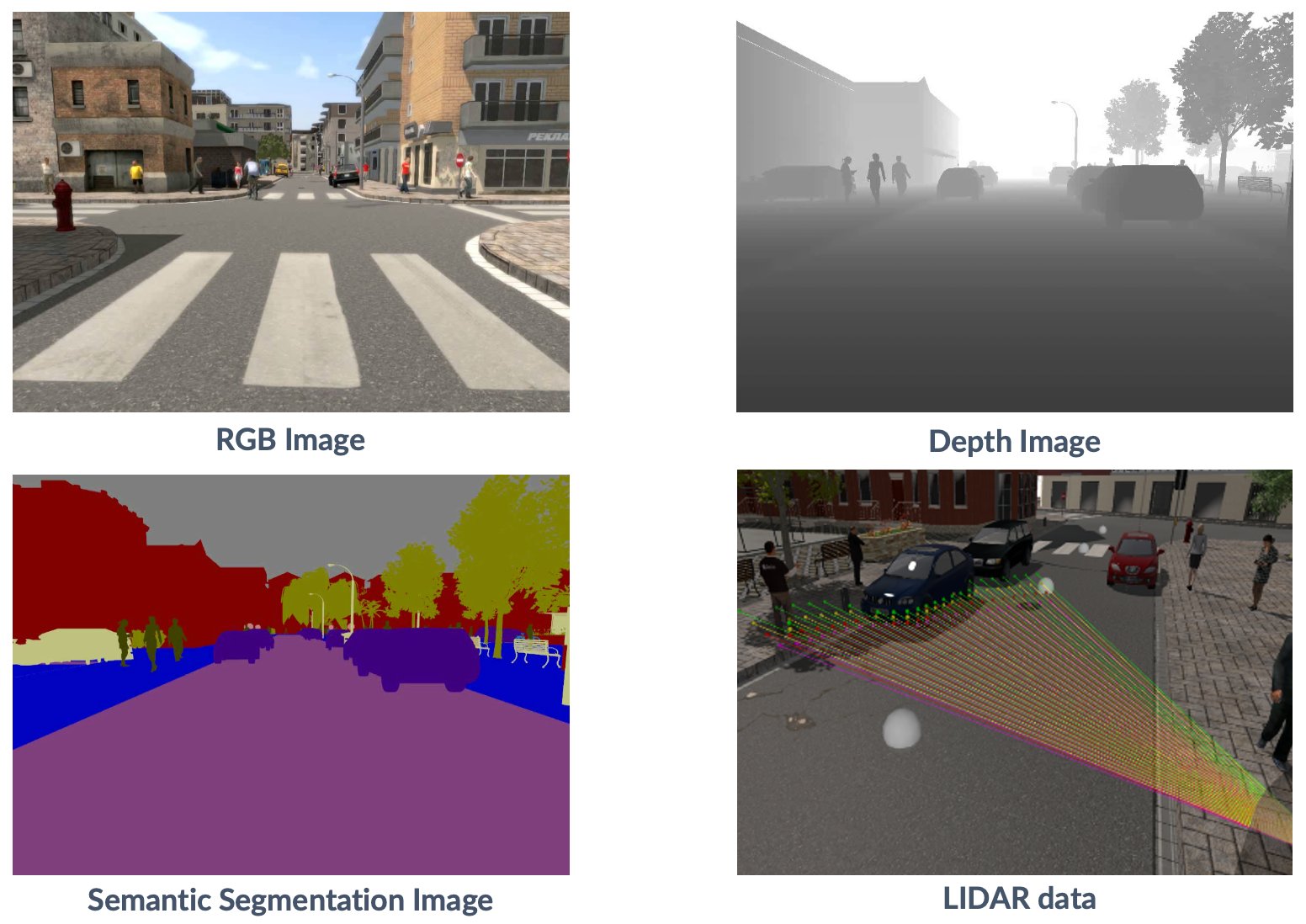

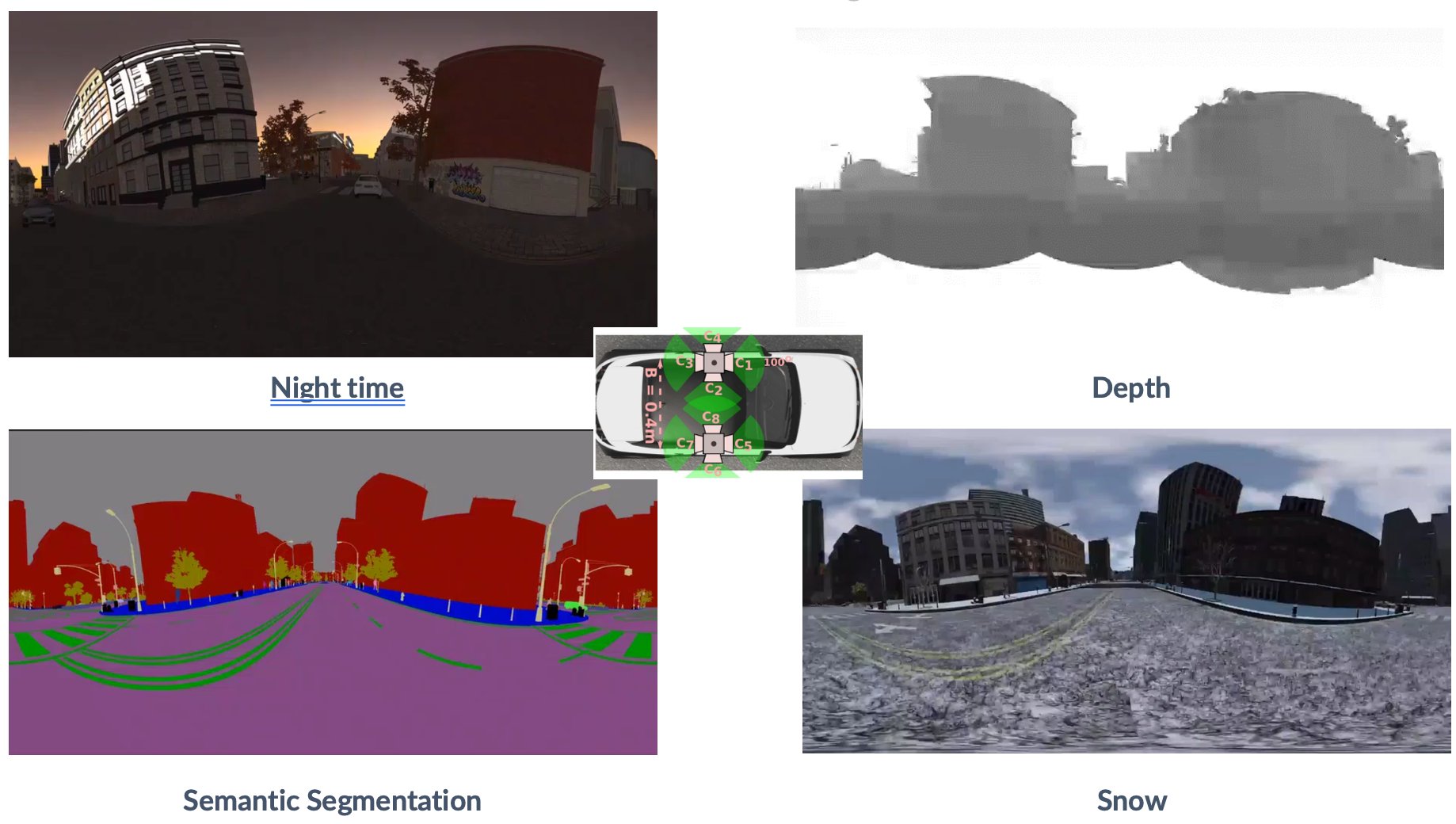

Training and testing autonomous driving algorithms on a real vehicle is expensive, slow, and carries safety risks. To overcome this, we developed SYNTHIA (SYNTHetic collection of Imagery and Annotations), a virtual urban environment purpose-built for autonomous driving research that became a major research contribution in its own right.

SYNTHIA generates photo-realistic urban driving scenes with automatically produced pixel-level ground truth, including depth maps and semantic segmentation labels, rendered from multiple cameras including 360-degree panoramic views. Crucially, it covers a wide range of conditions, including different times of day, weather (rain, snow, fog), and seasons, providing the diversity needed to train robust models.
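Per-pixel semantic labels are what make segmentation models both trainable and measurable. A standard evaluation compares predicted and ground-truth label maps through a confusion matrix and reports per-class intersection-over-union (IoU) and its mean (mIoU). The sketch below computes these on small label grids; the class indices, the optional `ignore_label`, and the toy data are assumptions, and it does not use SYNTHIA's actual class list or file format.

```python
def confusion_matrix(gt, pred, num_classes, ignore_label=None):
    """cm[g][p] counts pixels with ground-truth class g predicted as class p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for g_row, p_row in zip(gt, pred):
        for g, p in zip(g_row, p_row):
            if g == ignore_label:
                continue  # unlabeled pixels do not count
            cm[g][p] += 1
    return cm


def per_class_iou(cm):
    """IoU = TP / (TP + FP + FN) per class; None for classes absent from both maps."""
    ious = []
    for c in range(len(cm)):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp
        fp = sum(row[c] for row in cm) - tp
        denom = tp + fp + fn
        ious.append(tp / denom if denom else None)
    return ious


def mean_iou(gt, pred, num_classes, ignore_label=None):
    """Mean IoU over classes that appear in the ground truth or the prediction."""
    cm = confusion_matrix(gt, pred, num_classes, ignore_label)
    ious = [i for i in per_class_iou(cm) if i is not None]
    return sum(ious) / len(ious) if ious else 0.0
```

Because a renderer knows exactly which object produced each pixel, synthetic data supplies these label maps for free, whereas real images require costly manual annotation.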

Within the Elektra project, SYNTHIA served as a safe and scalable sandbox for developing and validating the full autonomous driving stack. Perception algorithms were pre-trained on synthetic data before transfer to the real vehicle. Planning and control policies were tested in simulation before real-world deployment. This sim-to-real methodology significantly accelerated development and reduced risk during on-road testing.

SYNTHIA was published at CVPR 2016 and has accumulated thousands of citations, becoming one of the most widely used synthetic datasets in the autonomous driving research community. See the SYNTHIA project page.

Communications

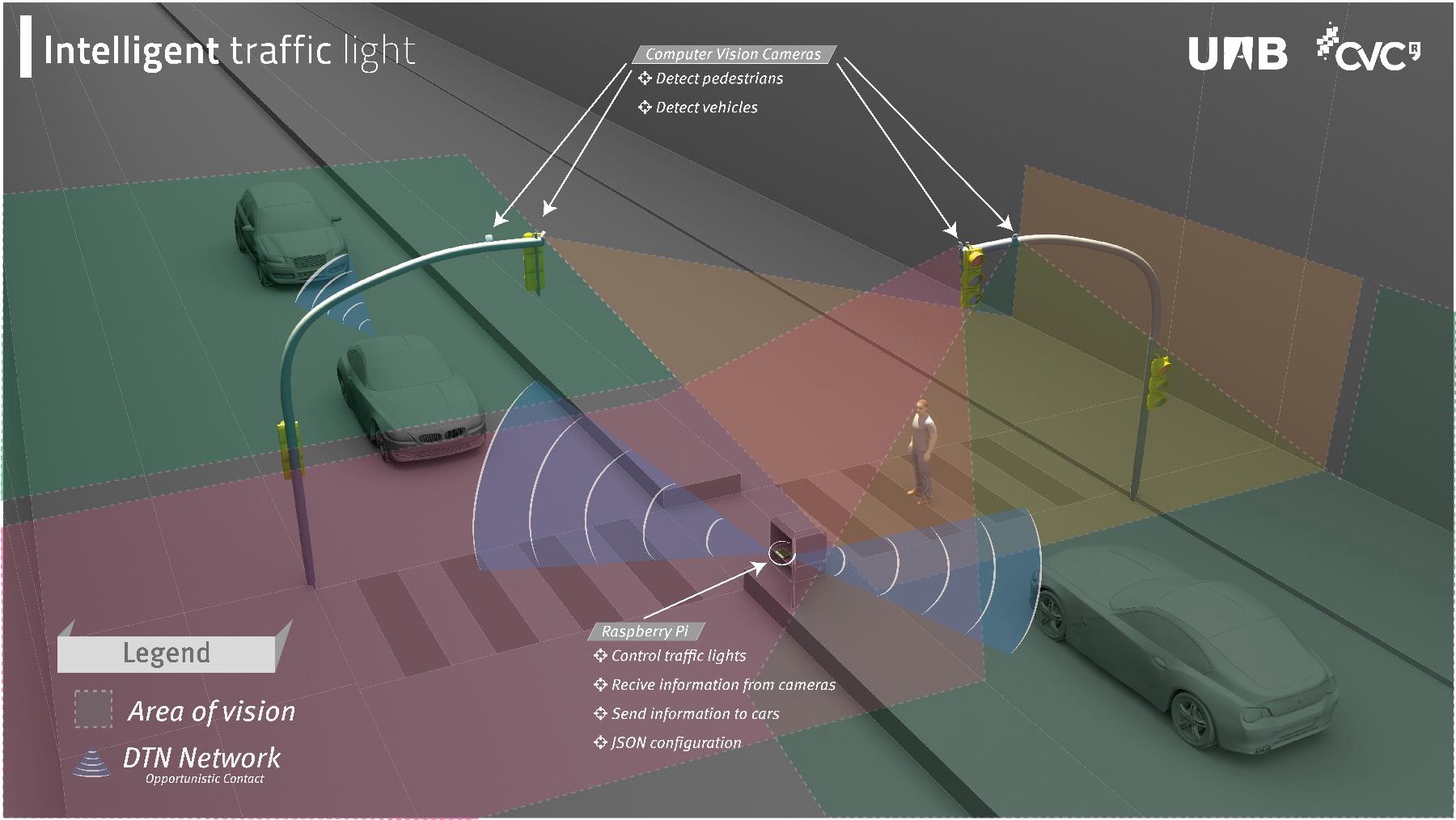

Beyond the vehicle itself, Elektra explored how autonomous vehicles can interact with road infrastructure through Vehicle-to-Vehicle (V2V) and Vehicle-to-Infrastructure (V2I) communication protocols.

The flagship demonstration of this workstream was an intelligent traffic light system. Camera-equipped traffic lights detect pedestrians and vehicles in their field of view and relay that information to a central Raspberry Pi controller, which adapts signal timing accordingly and broadcasts relevant information to approaching vehicles over a DTN (Delay-Tolerant Network) opportunistic contact protocol. This closed the loop between roadside perception and vehicle awareness, allowing Elektra to receive information about intersection conditions before even entering the junction.
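The key idea of a delay-tolerant network is store-carry-forward: a node keeps messages ("bundles") until an opportunistic contact with another node occurs, then hands over whatever is still valid. The sketch below models the traffic light controller and a vehicle as DTN nodes that exchange time-limited bundles on contact. The class names, payload fields, and expiry policy are hypothetical; this is neither the project's implementation nor the API of any Bundle Protocol library.

```python
from dataclasses import dataclass, field


@dataclass
class Bundle:
    bundle_id: str
    payload: dict       # e.g. detected pedestrians or signal phase (illustrative)
    created_at: float   # seconds
    lifetime_s: float   # bundle is dropped after created_at + lifetime_s

    def expired(self, now):
        return now > self.created_at + self.lifetime_s


@dataclass
class DtnNode:
    name: str
    store: dict = field(default_factory=dict)      # bundle_id -> Bundle
    delivered: dict = field(default_factory=dict)  # peer name -> set of sent ids

    def originate(self, bundle):
        """Create a new bundle locally (e.g. from roadside camera detections)."""
        self.store[bundle.bundle_id] = bundle

    def on_contact(self, peer, now):
        """Opportunistic contact: purge expired bundles, then push the rest."""
        self.store = {bid: b for bid, b in self.store.items() if not b.expired(now)}
        sent = self.delivered.setdefault(peer.name, set())
        forwarded = []
        for bid, bundle in self.store.items():
            if bid not in sent and bid not in peer.store:
                peer.store[bid] = bundle
                sent.add(bid)
                forwarded.append(bid)
        return forwarded
```

Short lifetimes suit this use case: information about pedestrians at a crossing is only useful for a few seconds, so stale bundles are discarded rather than carried around indefinitely.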

This work illustrated how smart infrastructure and autonomous vehicles can cooperate to improve safety and traffic flow, a direction that remains highly relevant to modern intelligent mobility research.

Outreach & Publications

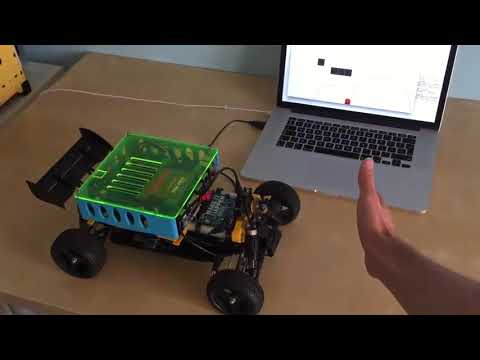

Elektra quickly became a reference project for autonomous driving research in Catalonia, attracting significant public interest and media attention. The vehicle was demonstrated at multiple public events, bringing autonomous driving technology directly to general audiences including families and school children. A particularly memorable outreach initiative saw high school students building their own autonomous driving system on a remote-control car, inspired by the Elektra platform and mentored by the CVC team.

The project received substantial recognition from the research community and industry. NVIDIA sponsored the project with GPUs, cameras, and a DRIVE PX 2 platform, and our embedded pedestrian detector won Best Poster at NVIDIA GTC 2016 in the USA. The SYNTHIA dataset, published at CVPR 2016, went on to become one of the most cited synthetic datasets in autonomous driving research. Pedestrian detection work from the project traces back to CVPR 2010, one of the earliest demonstrations of training detectors on synthetic data.

Over the course of the project the team produced a large body of peer-reviewed publications spanning perception, localization, planning, simulation, and embedded computing. A full list of publications is available on Google Scholar.